Software Factory

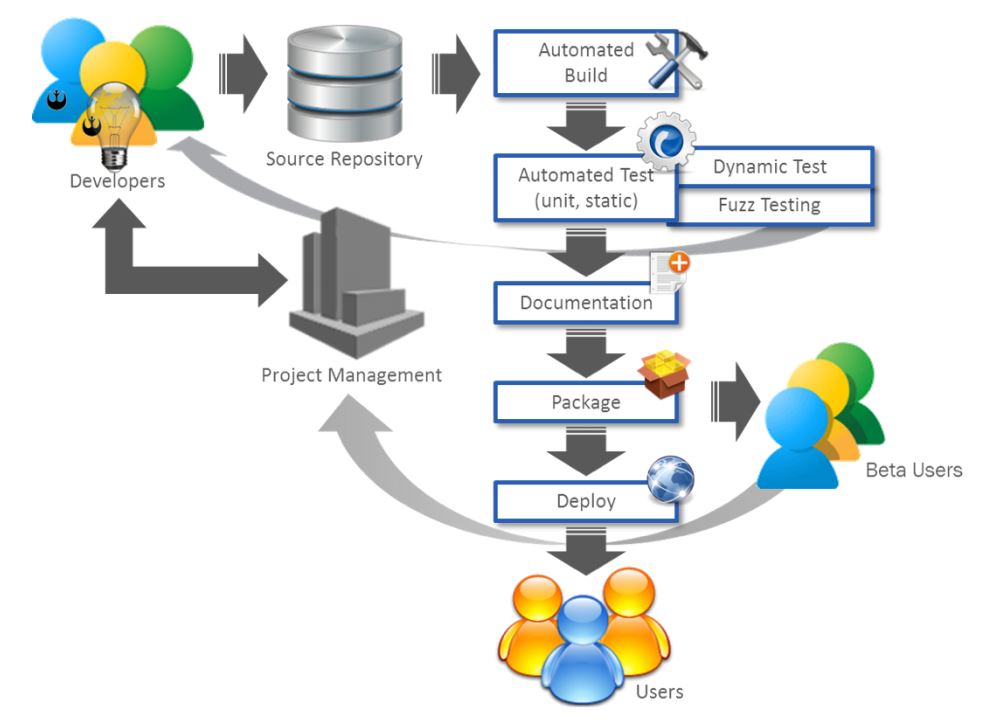

The software factory is where low-cost, cloud-based computing is used to assemble a set of tools that enable developers, users, and management to work together on a daily tempo. The goal is to ensure the code meets requirements by building and testing the application automatically every day and feeding any issues back to the developer responsible for the code. A source code repository archives current and past versions of the application, while each developer works on a local copy of the code. Once a developer's copy reaches a stable version, it is uploaded to the repository along with extensive tests, test data, and documentation listing the added features and resolved issues. In most organizations, code is peer reviewed before the upload. Peer review is especially useful for new members of the team, allowing them to learn the nuances of the software system's conventions.
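The upload rules described above (tests, test data, and documentation accompany the code, and a peer has reviewed it) can be expressed as a simple gate. The sketch below is hypothetical: the `ChangeSet` structure, the `tests/` directory, and the `CHANGELOG.md` file name are assumed conventions, not part of any particular repository product. Hosted repositories such as GitHub and GitLab typically enforce rules like these through their own branch-protection or merge-approval settings.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ChangeSet:
    """Hypothetical description of a developer's proposed upload."""
    author: str
    files: List[str]
    approvals: List[str] = field(default_factory=list)


def upload_blockers(change: ChangeSet) -> List[str]:
    """Return the reasons this change may not be uploaded yet (empty list = OK)."""
    blockers = []
    # Assumed convention: tests and test data live under tests/.
    if not any(path.startswith("tests/") for path in change.files):
        blockers.append("no tests or test data included")
    # Assumed convention: features and resolved issues are listed in CHANGELOG.md.
    if "CHANGELOG.md" not in change.files:
        blockers.append("no changelog entry listing features and resolved issues")
    # Peer review means an approval from someone other than the author.
    if not any(reviewer != change.author for reviewer in change.approvals):
        blockers.append("no peer review by someone other than the author")
    return blockers


if __name__ == "__main__":
    change = ChangeSet(
        author="alice",
        files=["src/nav.py", "tests/test_nav.py", "CHANGELOG.md"],
        approvals=["bob"],
    )
    print(upload_blockers(change))
```

A self-approved change that touches only `src/nav.py` would report all three blockers.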

Once the code is uploaded, a style checker ensures there are no violations of coding conventions, and then the software system is built. For interpreted languages such as Python, the build process involves syntax checking and static testing (e.g., no undeclared variables and no variables used after they have been discarded). For compiled languages such as C, the build involves compiling the source code into executable code. Individual modules then go through unit testing, which confirms that previously identified issues remain resolved and that the required functionality still works. In a new project, the unit tests are often the first software written, and comprehensive unit tests can offer the best insight into what the code is intended to do. The full build is then dynamically tested by executing use scenarios identified as edge cases. Fuzz testing is also used: the software is fed large numbers of random, unexpected, or malformed inputs to look for instances of unexpected behavior. Any issues identified during the build and test process are communicated to the responsible programmer so that errors receive attention quickly.
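The stage order above (style check, build, unit tests, fuzz tests, then feedback to the programmer) can be sketched in a few lines of Python. Everything here is illustrative: `clamp_throttle` is a hypothetical function under test, the style rule (no tabs, 79-character lines) is an assumed convention, and `notify` stands in for whatever channel reaches the developer. The build stage only checks syntax via `compile()`; detecting undeclared names would need a linter such as Pyflakes. A real pipeline would also run submitted code in an isolated environment rather than calling `exec()` directly.

```python
import random
from typing import Callable, Optional

# Hypothetical submitted module.
SOURCE = '''
def clamp_throttle(value):
    """Limit a requested throttle setting to the range [0.0, 1.0]."""
    return max(0.0, min(1.0, value))
'''


def style_check(source: str) -> Optional[str]:
    # Assumed convention: no tabs and no line longer than 79 characters.
    for n, line in enumerate(source.splitlines(), start=1):
        if "\t" in line or len(line) > 79:
            return f"style: line {n} breaks the coding convention"
    return None


def build(source: str, module: dict) -> Optional[str]:
    # compile() performs the syntax check; exec() loads the definitions.
    try:
        exec(compile(source, "<submitted>", "exec"), module)
    except SyntaxError as err:
        return f"build: syntax error on line {err.lineno}: {err.msg}"
    return None


def unit_tests(module: dict) -> Optional[str]:
    # Known input/output pairs, including previously reported edge cases.
    clamp = module["clamp_throttle"]
    for arg, expected in [(-0.5, 0.0), (0.25, 0.25), (3.0, 1.0)]:
        result = clamp(arg)
        if result != expected:
            return f"unit: clamp_throttle({arg}) returned {result}, expected {expected}"
    return None


def fuzz_test(module: dict, trials: int = 10_000, seed: int = 0) -> Optional[str]:
    # Random inputs far outside the valid range; the output must stay in [0, 1].
    clamp = module["clamp_throttle"]
    rng = random.Random(seed)
    for _ in range(trials):
        arg = rng.uniform(-1e6, 1e6)
        if not 0.0 <= clamp(arg) <= 1.0:
            return f"fuzz: clamp_throttle({arg!r}) left [0, 1]"
    return None


def run_pipeline(source: str, author: str,
                 notify: Callable[[str, str], None]) -> bool:
    """Run the stages in order; stop at the first failure and tell the author."""
    module: dict = {}
    stages = [
        lambda: style_check(source),
        lambda: build(source, module),
        lambda: unit_tests(module),
        lambda: fuzz_test(module),
    ]
    for stage in stages:
        problem = stage()
        if problem is not None:
            notify(author, problem)
            return False
    return True


if __name__ == "__main__":
    print(run_pipeline(SOURCE, "dev", lambda who, msg: print(who, msg)))
```

Stopping at the first failing stage keeps the feedback focused: a syntax error is reported before any test runs, so the programmer sees the most basic problem first.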

The build process also generates documentation. Typical toolchains allow the programmer to write documentation directly into the source code, from which it can be extracted and assembled into documents. Next, the full system is packaged into a container, allowing rapid, reliable deployment to users. Users and automated monitoring provide feedback to the development team through the project management software, which serves as a channel for communicating desired feature additions and modifications as well as prioritized bug reports.
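As a small illustration of documentation that lives in the source code, the sketch below uses Python's standard `ast` module to pull docstrings out of a source file without running it and assembles them into a Markdown page. The module contents and the `assemble_docs` helper are hypothetical; production toolchains such as Sphinx (Python) or Doxygen (C and C++) do the same job with far more features.

```python
import ast

# Hypothetical module whose documentation is written inline as docstrings.
SOURCE = '''
def clamp_throttle(value):
    """Limit a requested throttle setting to the range [0.0, 1.0]."""
    return max(0.0, min(1.0, value))

def heading_error(target, actual):
    """Return target - actual in degrees, wrapped to [-180, 180)."""
    return (target - actual + 180.0) % 360.0 - 180.0
'''


def assemble_docs(title: str, source: str) -> str:
    """Build a Markdown reference page from the docstrings in *source*."""
    tree = ast.parse(source)  # parse only; the code is never executed
    lines = [f"# {title}", ""]
    for node in tree.body:
        # Document public top-level functions in source order.
        if isinstance(node, ast.FunctionDef) and not node.name.startswith("_"):
            params = ", ".join(arg.arg for arg in node.args.args)
            lines.append(f"## {node.name}({params})")
            lines.append(ast.get_docstring(node) or "(undocumented)")
            lines.append("")
    return "\n".join(lines)


if __name__ == "__main__":
    print(assemble_docs("nav module", SOURCE))
```

Because the documentation is extracted on every build, it changes in step with the code instead of drifting out of date in a separate file.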

The Defense Science Board recommends that the efficacy of the offeror’s software factory be a key evaluation criterion in the source selection process.

Software Factory Source Selection Criteria Suggestions

- Configuration management software (e.g., Puppet, Chef, Ansible)

- Continuous integration (build and test) systems (e.g., Travis CI as a hosted service, Jenkins as an open source application)

- Scripts and code used to release software (e.g., Python scripts)

- Servers, network, or other infrastructure that support release tools

- Software and tools to support developer self-service operations (e.g., New Relic for tracking application performance over time, diagnostic tools, monitoring tools)

- External test frameworks (e.g., Jersey Test Framework, TestPlant/eggPlant)

- External operational monitoring and log mining tools (e.g., Splunk, Elasticsearch + Logstash + Kibana (ELK) Stack)

- Source code repositories (e.g., GitHub as a hosted service, GitLab as an open source application)

- Issue tracking systems (e.g., JIRA, Trello, GitHub)

- Container-driven tools (e.g., Docker, Amazon Web Services (AWS) Elastic Container Service, Kubernetes)

- Requirements management (e.g., DOORS (Dynamic Object Oriented Requirements System), Blueprint)

- Infrastructure and cloud providers (e.g., AWS, Rackspace, Azure, Red Hat OpenShift, Pivotal Cloud Foundry)

- Integrated development environment (IDE) support for the DevOps process
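One way an evaluation team might use the list above is as a coverage checklist: record which tools an offeror names for each category and flag the categories left unaddressed. The sketch below is hypothetical; the category keys paraphrase the bullets, and the proposal data is invented for illustration. It checks only coverage, not the efficacy the Defense Science Board asks evaluators to judge.

```python
from typing import Dict, List

# Category keys paraphrase the source selection criteria listed above.
CRITERIA: List[str] = [
    "configuration management",
    "continuous integration",
    "release scripts",
    "release infrastructure",
    "developer self-service",
    "external test frameworks",
    "operational monitoring and log mining",
    "source code repository",
    "issue tracking",
    "container tools",
    "requirements management",
    "infrastructure and cloud providers",
    "IDE DevOps process",
]


def coverage_gaps(proposal: Dict[str, List[str]]) -> List[str]:
    """Return the criteria for which the proposal names no tool."""
    # A missing key and an empty tool list both count as a gap.
    return [criterion for criterion in CRITERIA if not proposal.get(criterion)]


if __name__ == "__main__":
    # Invented example proposal covering only three categories.
    proposal = {
        "continuous integration": ["Jenkins"],
        "source code repository": ["GitLab"],
        "issue tracking": ["JIRA"],
    }
    for gap in coverage_gaps(proposal):
        print("not addressed:", gap)
```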

DoD Software Factories